Lawrence Edward Page is one of the most famous businessmen in the world. He is best known as a co-founder of Google and Alphabet. Today he has stepped back into a more hands-off role as a member of the Board of Directors at Google’s parent company, Alphabet. In this article we will focus on how Page made Google the most dominant search engine in the world by doing things right, and how in recent years, as his role has declined, the company seems to have forgotten what got it where it is. We will conclude by discussing whatever we can dig up about his personal life.

PageRank

PageRank was the original Google algorithm, developed in the 1990s by Larry Page together with Sergey Brin and named after Page. It worked primarily by analyzing HTML hyperlinks on web pages and ranking pages based on the quality and quantity of links pointing to them. This was a groundbreaking innovation at a time when other search engines like Yahoo were still using the directory approach. Today most search engines use something similar to PageRank, and the ones that don’t are typically not considered search engines at all anymore. PageRank had many advantages that still influence Google search results today, but it also had disadvantages that led Google to develop different algorithms.
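To make the link-analysis idea concrete, here is a minimal sketch of the textbook PageRank iteration. It is not Google's production code. The damping factor of 0.85 is the commonly cited value, and the `toy_web` graph and its `.example` domains are hypothetical.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Approximate PageRank for a dict mapping each page to the pages it links to."""
    # Collect every page that appears as a source or a target.
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with the small "random jump" share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its current rank evenly among its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank across all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages link to c.example, which links to a.example.
toy_web = {
    "a.example": ["c.example"],
    "b.example": ["c.example"],
    "c.example": ["a.example"],
    "d.example": ["c.example"],
}
ranks = pagerank(toy_web)
```

In this toy graph `c.example` ends up with the highest score because three pages link to it. `a.example` comes second because its single link comes from the highest-ranked page, which illustrates why link quality matters as well as link quantity.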

PageRank Advantages

PageRank was great because if you could get a small number of backlinks to your site from high-quality sources, they almost guaranteed that your website would always be successful. We say “almost” because we thought we had enough quality backlinks to guarantee our first site decent Google rankings until Google employees admitted to manually demoting it to the point that it rarely ranks for anything other than its brand name. Google has every right to discount backlinks that were manufactured or otherwise not earned, because doing so is necessary to maintain the quality of its search results. None of our links fell into that category, so there was nothing Google could do to demote us within the bounds of its stated policies. All of our backlinks were earned, usually from media coverage of innovative yet controversial ideas. We also did all we could to help Google’s algorithm correctly identify the subjects of our pages by making sure the targeted keywords were present in the URLs, page titles, meta tags, and structured data. That is all white hat SEO in accordance with Google’s guidelines. We had no reason to think that Google employees would ever manually suppress our site just because they were personally offended by the content. Had they simply let PageRank do its job, we would be doing just fine.

PageRank didn’t focus solely on link analysis. It also focused on relevancy to search terms. It would give weight to exact matches and to where those matches appeared on the page. An exact match in the title, URL, meta description, and on-page content would typically result in your page ranking higher than competing pages with fewer matches, even if the competing sites had far more backlinks. We used this to our advantage, and there was a time when we could outrank mainstream media outlets simply by making sure that our targeted search terms appeared in our page titles, URLs, meta descriptions, and on-page content. Our pages would rank higher because our backlink profile gave us enough authority for PageRank to consider us a quality source of information on niche topics. We were not considered high quality, but we were good enough for more relevant content on our site to outrank sources usually considered more authoritative.
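The trade-off between exact matches and backlinks can be illustrated with a toy scoring function. This is only a sketch under stated assumptions. The field weights, the `authority` value, and the way the two are multiplied are all hypothetical and are not Google's actual formula, and the two sample pages are invented.

```python
import re

# Hypothetical weights per field: assumptions chosen for illustration only.
FIELD_WEIGHTS = {"title": 3.0, "url": 2.0, "meta_description": 1.0, "content": 1.0}


def normalize(text):
    """Lowercase and collapse punctuation so 'blue-widget' matches 'blue widget'."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def relevance(page, query):
    """Sum the weights of every field that contains the exact query phrase."""
    phrase = normalize(query)
    return sum(
        weight
        for field, weight in FIELD_WEIGHTS.items()
        if phrase in normalize(page.get(field, ""))
    )


def score(page, query):
    """Scale relevance by a link-authority value between 0 and 1 (assumed formula)."""
    return relevance(page, query) * (1.0 + page.get("authority", 0.0))


# Invented niche page: modest authority, exact match in all four fields.
niche_page = {
    "title": "Blue Widget Repair Guide",
    "url": "https://niche.example/blue-widget-repair",
    "meta_description": "A step-by-step blue widget repair walkthrough.",
    "content": "This guide covers blue widget repair from start to finish.",
    "authority": 0.2,
}
# Invented major outlet: high authority, exact match only in the body text.
major_outlet = {
    "title": "Home Maintenance Tips for Spring",
    "url": "https://news.example/home-maintenance-tips",
    "meta_description": "Seasonal advice for homeowners.",
    "content": "Readers also asked about blue widget repair this year.",
    "authority": 0.9,
}
query = "blue widget repair"
```

Under these assumed weights, `score(niche_page, query)` exceeds `score(major_outlet, query)` even though the outlet has far more authority, which mirrors the behavior described above.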

Everything we did was white hat, but that didn’t stop a Google employee from falsely accusing us in the press earlier this year of gaming the system. We allegedly gamed the system by formatting our pages to rank high in search results for people’s names. The problem with that allegation is that we didn’t game the system at all. We simply made sure our pages adhered to Google’s guidelines by including names of people in page titles, URLs, meta descriptions, structured data, and on-page content when the primary topics of the pages were people. The only plausible argument they might make in support of their claim is that most people don’t write content the way we did when they are only writing for other people. People writing just for people might write a title like, “Man arrested for stealing car,” but we would solicit the subject’s name and location and make sure they went in the title. The end result would be something like “John Doe, Anytown, USA: Car Thief.” That is not black hat. It simply helps Google and users identify the primary subject of the page and something about the subject. It is far more user-friendly than a vague description written by a user. Another approach we used was adding a recommendation to submission pages that the author put the subject’s name in the title, but all too often users ignored it.

PageRank Disadvantages

PageRank was vulnerable to manipulation. People working in the search engine optimization (SEO) industry figured out early on that they could trick Google into thinking their sites were more authoritative by manufacturing links to themselves. They would manufacture links by paying other sites to link to them, posting links to themselves elsewhere, and in some cases creating networks of websites for the sole purpose of linking back to their main sites. Google fought this successfully with algorithm updates and manual penalties. The algorithm updates would identify bad links and demote them automatically. The manual penalties would be handed down by Google employees after they reviewed the links themselves.

Manual penalties are perhaps the earliest example of manual manipulation by Google employees. In many cases they remove entire sites from search results and send the owner a message stating why. When the necessary changes are made, the webmaster can submit the site for reconsideration. If a Google employee determines that the site no longer engages in the undesired practice, they will probably allow it back into search. This process of removal is categorically different from manipulating rankings themselves. However, Google says “Most issues reported here will result in pages or sites being ranked lower,” which indicates that manual actions include manually manipulating rankings. To their credit, these manual actions appear to fall outside the scope of manually manipulating search for political reasons, which was the context of Google CEO Sundar Pichai’s testimony when he said, “it is not possible for an individual employee or groups of employees to manipulate our search results.” Even so, it shows that it was possible for employees to manipulate results when that statement was made.

Panda and Penguin

Google declared war on content farms in 2011 with an algorithm update called Panda, which was followed by an update called Penguin in 2012. The Panda update successfully penalized low-quality sites that existed for the sole purpose of manufacturing large quantities of content rich with links. Article marketing was a good example, in which authors wanting to boost their PageRank would write articles full of links to themselves and offer them to other sites as free content. Giant directories of articles emerged, and the directories themselves amassed so much PageRank that a well-worded article could easily rank on the front page of search in competitive industries. This author, for example, took a job in 2010 helping a local company boost search traffic for some new sites. Since their sites had no link authority and were full of duplicate content, I realized I would be better off trying to rank content hosted elsewhere that linked to them. In furtherance of that goal I would submit articles to places like Ezine Articles with targeted search terms in their titles and links to my client. It was not long before I produced front page results for stuff like “Best [insert product name]” or “Top 10 [insert product name].” Panda put a stop to that, and today few article marketing sites are left standing. Penguin followed up by targeting sites with spammy backlinks. What these updates had in common was that they both made search better. Larry Page was Google’s CEO at the time.

RankBrain and BERT

Google began deviating from its course shortly after Sundar Pichai became CEO in 2015. The company released a series of algorithm updates that were supposedly intended to improve search results by making its bot think more like a person. The results have been mixed. In some cases users are served higher quality content consistent with their search, but in others it has become harder for users to find what they are looking for. That is because RankBrain produces synonymous results that are similar but often irrelevant to the user’s query. This was problematic for us because we often found ourselves ranking lower despite our content being an exact match. Often users would be served synonymous content that RankBrain thought was both the same thing and higher quality. To their credit, the “higher quality” results were usually much better written and from much more reliable sources, but they were still irrelevant. BERT took things further by trying to figure out the intent of long-tail queries, but the end results are still frequently synonymous and not exactly what the user is looking for because exact matches are often ignored.

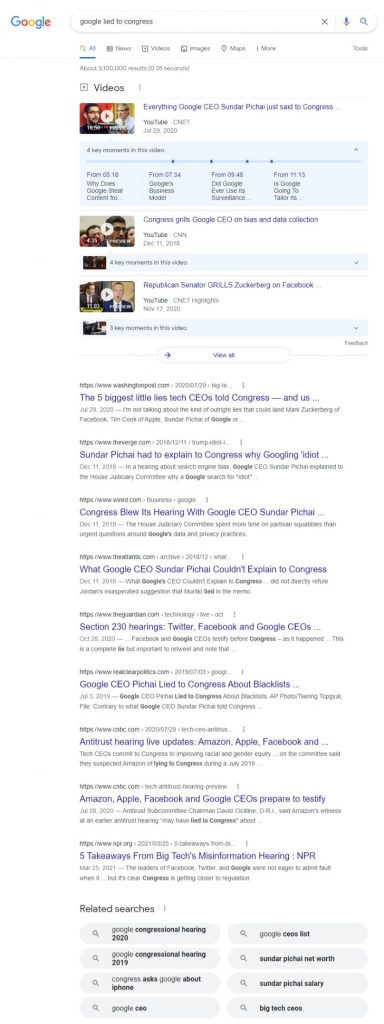

Lets take a look at a query that Google gets wrong all the time either due to flaws with the algorithm or manual manipulation. The query is “Google lied to Congress” without quotes:

As you can see only one result truly matches the search term by stating correctly that “Google lied to Congress.” That result is from Real Clear Politics and is titled, “Google CEO Pichai Lied to Congress About Blacklists.” It looks like an article at first, but if you scroll down you see that it is really a snippet linking to a blog post on Medium by a former Google employee exposing Pichai as a liar. This raises some important questions like, why isn’t the blog post itself outranking the snippet from Real Clear Politics and why is it the only relevant result? Every other result has little or nothing to do with Google lying to Congress. At best they present neutrally worded questions containing like terms. These results are likely to leave Google users thinking that Google did not lie to Congress simply because the top Google results do not appear to support that conclusion.

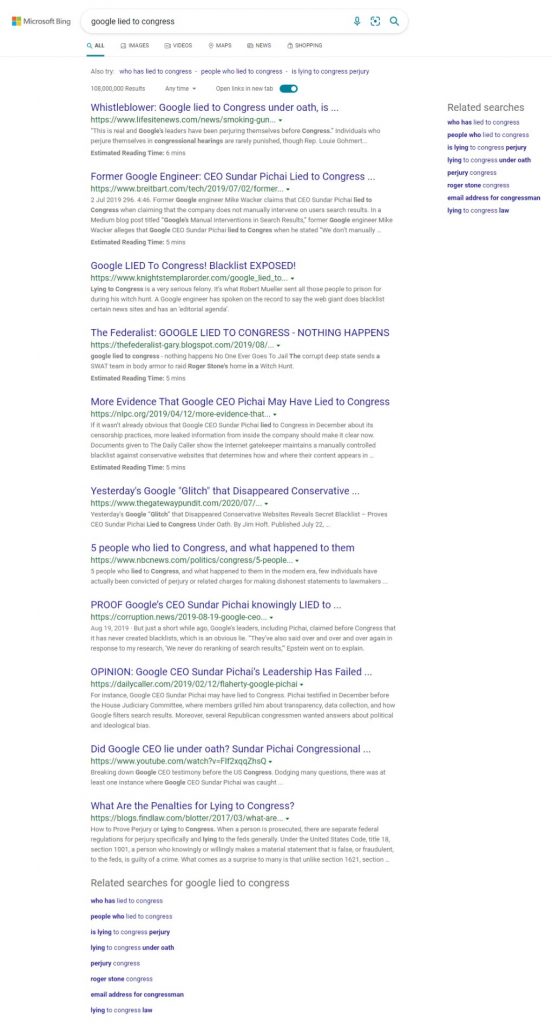

Now compare those irrelevant results to a search engine that still uses a PageRank style algorithm. That search engine is Bing. We love Bing for the most part because almost every site of ours that Google employees manually demoted still rank where they should on Bing. The only downside we have seen with Bing is that they are more likely to de-index sites entirely if they start ranking higher than Bing employees think they deserve and their algorithm can’t do anything about it. For instance, GoogleEmployees.com was number 2 on Bing for “Google Employees” when it was just one under construction page. It outranked many pages run by Google themselves. Then suddenly Bing removed our site. When we appealed to Bing they said it did not meet their quality guidelines, but didn’t say how and have not responded to subsequent attempts to get answers. Today we are hoping that we can add enough quality content for Bing to reconsider the site, but we suspect someone at Google of convincing someone at Bing not to help us. If Bing reconsiders our site we are confident it will rank well when there are relevant matches. Speaking of relevant matches, check out these Bing results for “Google lied to Congress” without quotes.

As you can see almost every result contains evidence of Google lying to Congress. That is because Bing’s algorithm concluded correctly that the user was looking for evidence that Google lied to Congress and no Bing employees manually manipulated the results in Google’s favor. Google would likely explain this difference as their algorithms favoring unbiased higher quality content. Back when Larry Page was running the show this probably would not have happened.

Personal Life

A lot of information regarding Page’s personal life is already in the public realm. The purpose of this section is to organize less widely circulated information and make it universally accessible and useful.

Where He Lives

Page has done a pretty good job scrubbing public records and the internet for personal information about him. The best we have to go on here are old online articles about his address being leaked. That address is 100 Waverley Oaks Court, Palo Alto, California according to Gawker. That property was tied to Page in the press as recently as 2014 when Business Insider included it on a list of the most expensive homes in tech. We have no reason to think that he has since sold the property.

As you can see Google Earth shows the address 174 by default instead of 100 for that location, but we think it is in the right area. If you back out to a satellite view it looks like the place featured in various publications. He put in so much work buying up adjacent lots and tearing stuff down as part of a massive eco-friendly construction project that he likely still lives there. Rarely does a man put in so much work only to move.

Conclusion

Larry Page needs to get his company back on the right track. Google got to where it is by helping people find what they are looking for, only to turn around and control what they see. The company has crossed the line and has become a dangerous threat to the American way of life.